In this blog post, we will provide an introduction to vector databases. We will discuss the different types of vector representations, and we will explain how vector databases work. We will also discuss some of the benefits of using vector databases.

What are Vector Representations?

Vector representations serve as a bridge, translating various forms of data - such as text, images, and audio - into numeric form. This conversion facilitates the encapsulation of meaning, characteristics, or features from the original data, making it more accessible for computational processing and analysis.

Several types of vector representations are commonly used, each with its unique advantages and applications. Here’s a closer look at some of these:

-

Word Embeddings: These are vector representations specifically designed for words, capturing their semantic meanings. These embeddings are often derived from extensive text corpora, leveraging machine learning techniques to represent the nuanced relationships between words. Word embeddings find wide-ranging applications across numerous tasks, including text classification, machine translation, and question answering.

-

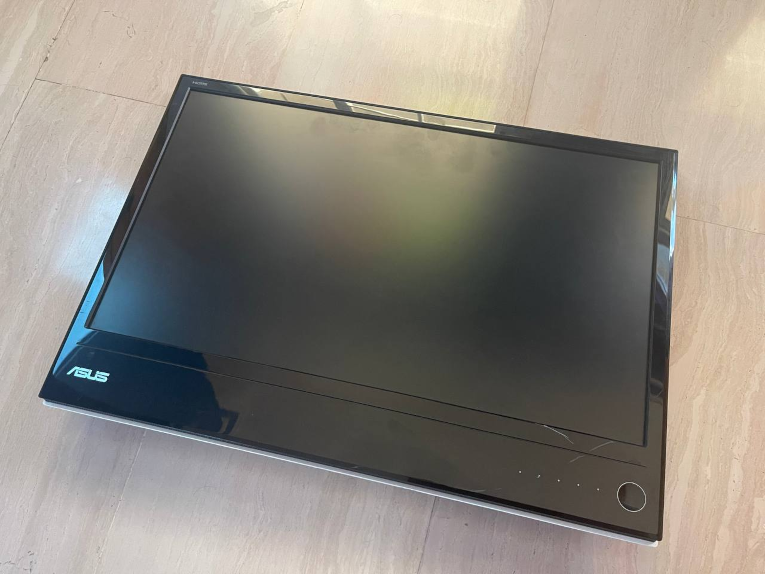

Image Embeddings: Just as words can be translated into numeric form, images can be transformed into vector representations as well. Image embeddings distill visual content into a format that machines can understand and process. These embeddings, generally learned from large image datasets, can represent the content of images, enabling tasks like image classification, image retrieval, and object detection.

-

Audio Embeddings: For audio data, audio embeddings provide a means to capture and represent the distinct characteristics of sound. These vector representations, trained on extensive audio corpora, can encapsulate the content of audio recordings. Applications for audio embeddings are diverse and include speech recognition, speaker identification, and music genre classification.

In essence, vector representations provide a powerful tool to translate various forms of data into a language that machines can understand, process, and learn from, paving the way for a broad spectrum of data analysis and machine learning tasks.

How do Vector Databases Work?

At their core, vector databases are engineered to handle the storage and querying of vector representations of data. They employ a mix of strategies to efficiently manage these vector representations, making it possible to retrieve relevant data quickly and accurately. Let’s delve into some of these techniques:

-

Hierarchical Indexes: Imagine storing and organizing data in a tree-like structure, where each branch leads you closer to the information you’re seeking. That’s essentially what hierarchical indexing does. It allows vector databases to swiftly locate vector representations similar to a given vector, reducing search times and increasing efficiency.

-

Spatial Indexes: Spatial indexing involves using specific data structures, like kd-trees or quadtrees, which are designed to handle multi-dimensional data. This approach allows vector databases to rapidly find vector representations that are in close proximity to a given vector. In other words, it’s like having a map that guides you to the data points that are ‘nearest’ to your location in a multi-dimensional space.

-

Graph Indexes: Graph indexing makes use of graph data structures to store and query vector representations. If you picture your data as a network of interconnected points, then graph indexing helps you find the data points that are directly linked to a given vector. It’s akin to finding friends-of-friends in a social network.

In a nutshell, vector databases utilize these techniques to efficiently navigate the high-dimensional space of vector representations, making it possible to quickly retrieve the data that’s most relevant to your query. This functionality is integral to many machine learning applications and data analysis tasks.

Benefits of Using Vector Databases

There are many benefits to using vector databases. Some of the benefits of using vector databases include:

- Faster Search: Vector databases can be used to quickly find vector representations that are similar to a given vector representation. This can be used to improve the performance of search applications, such as search engines and recommender systems.

- More Accurate Search: Vector databases can be used to find vector representations that are more semantically similar to a given vector representation. This can be used to improve the accuracy of search applications, such as search engines and recommender systems.

- More Flexible Search: Vector databases can be used to perform a variety of search queries, such as nearest neighbor search, range search, and keyword search. This makes vector databases more flexible than traditional relational databases.

Conclusion

Vector databases are a powerful new technology for storing and querying vector representations of data. Vector databases can be used to improve the performance and accuracy of a variety of applications, such as search engines, recommender systems, and machine learning applications.

]]>